Amid the breathtaking race unleashed by generative AI, many researchers are beginning to observe an underlying reality in the way artificial intelligence models develop.

Ancient philosophy is converging with science once again. Many people are unaware that philosophy and science once formed a single field of knowledge. Over time, historical circumstances separated their paths. Ancient philosophers observed nature and examined reality through logic. They built sophisticated frameworks of thought and generated hypotheses about the world in ways that are difficult for us to fully grasp today, reaching profound conclusions through pure reasoning, without swarm agents or big data.

The Platonic representation and AI

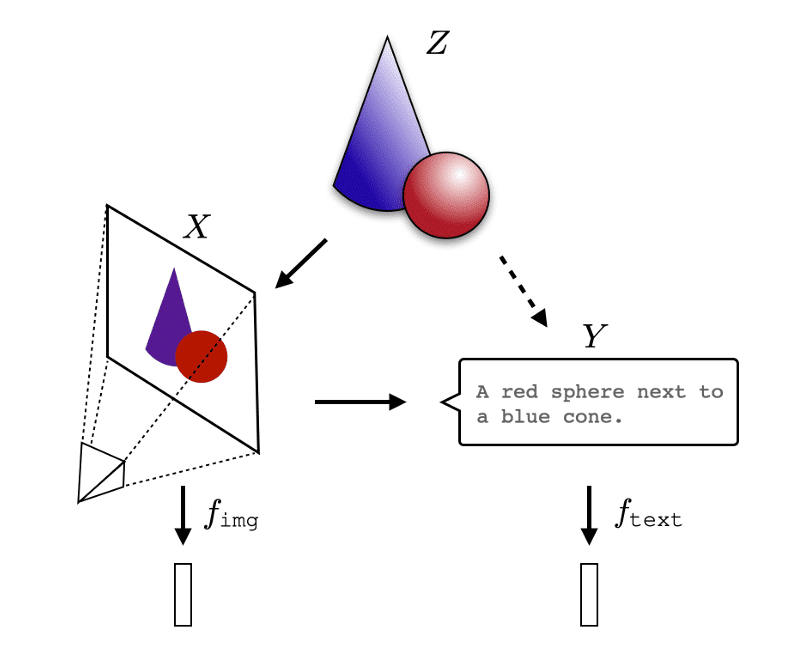

In the 2024 paper “The Platonic Representation Hypothesis,” researchers Huh, Cheung, Wang, and Isola propose something remarkable: AI models, even when trained in completely different ways, with different datasets, architectures, and objectives, appear to converge toward the same internal representation of reality.

Understanding a core idea

Imagine asking ten different people to draw a map of their city. Each person would use their own method and materials. But if everyone created their map with enough care and detail, the resulting maps would end up being equivalent, because they all represent the same underlying reality.

This is precisely what seems to be happening with AI models. As models grow larger and more capable, they increasingly converge toward very similar representations of the world.

The researchers compared vision models (trained exclusively on images) with language models (trained exclusively on text) and analyzed how they internally organize information. The result was striking: the larger and more capable a model becomes, the more its internal structure resembles that of other models—even if they were trained on completely different datasets and use entirely different architectures.
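How can two models with different architectures even be compared? The paper compares which inputs each model places close together, rather than comparing raw coordinates, and one of the measures it uses is a mutual nearest-neighbor alignment score. The following is a simplified sketch of that idea, not the authors' implementation: the function names, the use of cosine similarity, and the default k=5 are assumptions for illustration. Two models count as aligned to the extent that, for the same input, their sets of k nearest neighbors overlap.

```python
import numpy as np


def knn_sets(features: np.ndarray, k: int) -> list[set[int]]:
    """For each sample, the indices of its k nearest neighbors by cosine similarity."""
    normed = features / np.linalg.norm(features, axis=1, keepdims=True)
    sims = normed @ normed.T
    np.fill_diagonal(sims, -np.inf)  # a sample is not its own neighbor
    nearest = np.argsort(-sims, axis=1)[:, :k]  # most similar first
    return [set(row.tolist()) for row in nearest]


def mutual_knn_alignment(feats_a: np.ndarray, feats_b: np.ndarray, k: int = 5) -> float:
    """Average fraction of shared k-nearest neighbors between two models' embeddings.

    Row i of feats_a and row i of feats_b must describe the same input
    (e.g. an image and its caption) as seen by each model. The two models
    may use different embedding dimensions.
    """
    if feats_a.shape[0] != feats_b.shape[0]:
        raise ValueError("both models must embed the same set of inputs")
    neighbors_a = knn_sets(feats_a, k)
    neighbors_b = knn_sets(feats_b, k)
    overlaps = [len(na & nb) / k for na, nb in zip(neighbors_a, neighbors_b)]
    return float(np.mean(overlaps))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    model_a = rng.normal(size=(50, 16))
    # A rotated copy of model_a: different coordinates, identical geometry.
    rotation, _ = np.linalg.qr(rng.normal(size=(16, 16)))
    model_b = model_a @ rotation
    unrelated = rng.normal(size=(50, 32))
    print(mutual_knn_alignment(model_a, model_b))    # 1.0
    print(mutual_knn_alignment(model_a, unrelated))  # near chance, roughly 5/49
```

The rotated copy scores a perfect 1.0 because rotation changes every coordinate but preserves every similarity, which is exactly the sense in which two differently built models can share "the same" representation.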

For example, a model trained only on text develops an internal representation of color that is remarkably similar to the representation learned by a model trained only on images. The models never “communicated,” yet they arrived at structurally equivalent conclusions.

The researchers propose several possible explanations. One key idea is that when models must perform well across many tasks, fewer internal representations can satisfy all of those demands at once, so different models are pushed toward the same solutions. Neural networks also exhibit a natural bias toward simplicity: among the many solutions that fit the data, they tend to favor the simpler ones, and those simpler solutions often reflect the underlying structure of the world itself.

And this is where philosophy enters the room, like the elephant that has been waiting centuries for everyone to notice it. The Platonic representation echoes Plato’s Allegory of the Cave. The images and text used to train models are like shadows cast by reality. Models learn from those shadows, that is, from data, and progressively recover the deeper structure underlying reality.

Plato, Aristotle, and the quantum perspective

Plato distinguished between two levels of reality. The Forms (or Ideas) are perfect, eternal, and immutable. What we perceive through our senses are merely imperfect copies of those Forms. The Allegory of the Cave illustrates this: humans see only shadows projected on a wall, never reality itself.

What the paper suggests is that AI models, which are trained on millions of those shadows in the form of images, text, and sounds, may be algorithmically recovering something similar to the Forms: a deep and shared structure underlying all observable phenomena.

However, there is an important philosophical distinction. For Plato, the Forms are ontologically primary—they exist independently of any mind or perception. The perfect chair exists whether or not anyone thinks about it.

The hypothesis proposed in the paper is more modest and more scientific. The Platonic representation is essentially a statistical structure: a probabilistic model describing how events are distributed in the world. It is not pure metaphysics—it is mathematics. This perspective aligns more closely with scientific realism than with strict Platonism: the idea that science progressively converges toward increasingly accurate descriptions of reality.
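The authors go a step further and suggest what that statistical structure might look like: for a family of contrastive learners, they argue, the similarity between two events in the converged representation approximates their pointwise mutual information (PMI), that is, how much more often the two events co-occur than chance would predict. A minimal sketch of that kernel, computed from an explicit co-occurrence count matrix, is below. The event names and counts are invented for illustration, and real models learn this structure implicitly rather than from a table like this one.

```python
import numpy as np


def pmi_kernel(cooccurrence: np.ndarray) -> np.ndarray:
    """Pointwise mutual information between events, from a symmetric count matrix.

    PMI(a, b) = log P(a, b) / (P(a) P(b)): positive when a and b co-occur more
    often than chance, negative when less often.
    """
    joint = cooccurrence / cooccurrence.sum()
    marginal = joint.sum(axis=1)
    with np.errstate(divide="ignore"):  # a pair that never co-occurs gets -inf
        return np.log(joint / np.outer(marginal, marginal))


if __name__ == "__main__":
    events = ["red", "orange", "blue"]  # hypothetical events
    counts = np.array([
        [10.0, 8.0, 1.0],
        [8.0, 10.0, 1.0],
        [1.0, 1.0, 10.0],
    ])  # made-up co-occurrence counts, for illustration only
    kernel = pmi_kernel(counts)
    for i, a in enumerate(events):
        for j, b in enumerate(events):
            if i < j:
                print(f"PMI({a}, {b}) = {kernel[i, j]:+.2f}")
```

With these toy counts, "red" and "orange" end up with positive PMI and "red" and "blue" with negative PMI. Any learner whose representation approximates this kernel would place red near orange and far from blue, regardless of whether it saw the events as pixels or as words.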

Aristotle criticized Plato precisely for separating Forms from concrete things. For Aristotle, the form of a dog does not exist in a separate world. It exists within the dog itself, in its structure and organization.

In this sense, AI models seem closer to Aristotle’s view. They do not learn from an abstract realm of Ideas but from the structure embedded in concrete data. The representation emerges from the bottom up, from the copies, rather than descending from the ideal.

This also brings to mind Kant and his irreducible limit: we cannot access the world as it is in itself. We access the world only as it appears through the filters of our cognitive structure, our biology, and our sensory systems. All of science is built on top of those sensory biases.

AI models inherit those biases because they learn from data produced within the limits of human perception. The resulting synthesis can be extraordinarily powerful, but it may carry the same structural limitations, potentially amplified.

And then there is another twist: following this line of thought eventually leads to ideas such as circular conditioning, quantum theory, and the fact that reality cannot be interpreted without a prior framework, even though that framework is itself built from previous interpretations.

We never touch the underlying reality directly; we only interact with our sensations of it. This is the hermeneutic circle. Following this logic, the observing subject modifies the object, and the object, when observed, modifies the subject.

The system that models reality is itself part of the reality it models.

The “Platonic representation,” or what might be termed an “Aristotelian representation,” toward which AI models converge may not describe reality from the outside. It may instead reflect the best description reality can produce of itself from within.

Beyond scientific silos

In order for science to advance and encompass reality, it became increasingly specialized. Knowledge was divided into highly technical fields that allowed humanity, given the historical context, to make extraordinary progress. Figures like Aristotle or Leonardo da Vinci could span multiple domains, but modern scientific progress made that breadth impossible.

Knowledge was thus divided into disciplines: physics, chemistry, biology, psychology, economics, and philosophy. Each discipline developed its own language, methods, journals, and conferences. The cost was significant. Problems that are fundamentally unified were fragmented into separate domains that rarely communicate with one another. Consciousness, for example, is simultaneously a problem in neuroscience, physics, philosophy, and computation, yet each field largely studies it in isolation.

Artificial intelligence has the potential to change this. If AI models represent unified structures of knowledge, these silos could begin to disappear. We may become capable of connecting domains of knowledge and detecting patterns in ways that would otherwise be impossible. Not because humans lack intelligence, but because AI does not face the same institutional, cognitive, and biological barriers that we do.

This is already happening in small but meaningful ways. For example, AlphaFold, an advanced artificial intelligence system developed by DeepMind, has connected physics, chemistry, and biology in ways no single specialist had achieved before. Large language models are uncovering connections between academic papers from different fields that have gone decades without citing each other. Advances in this direction, particularly at Google DeepMind, are especially notable.

There is something melancholic about this realization. Several intellectual traditions attempted to achieve exactly this and were ultimately marginalized: the Renaissance polymaths; German Naturphilosophie, which sought a unified science; Diderot’s Encyclopédie project; twentieth-century structuralism, which searched for shared patterns across linguistics, anthropology, and psychology; and Leibniz’s vision of a universal language of thought.

All of them failed, in part because the necessary tools did not exist. The number of possible connections between domains far exceeds human cognitive capacity. Perhaps they simply arrived too early.

An open question for AI and philosophy

Is there a single underlying reality, or multiple ones? Is one more real than the others?

These are not rhetorical questions. They may become the next serious challenge for both philosophy and AI research. The convergence of models suggests that a unified structure may exist. However, if our data is shaped by our biology, how much of that convergence reflects reality, and how much reflects the projection of our own structure onto the world? Could synthetic data reshape that representation?

Could AI eventually surpass our representation of reality and construct its own?

An extraordinary moment to be alive

What makes this moment truly remarkable is that ideas that remained philosophical speculation for centuries are now becoming empirically testable hypotheses. And early evidence suggests that something along these lines may indeed exist.

AI does not solve the philosophical problem. But for the first time, it provides a tool operating at the scale required to seriously attempt it—not as speculative metaphysics, but as an empirical and mathematical research program.

Specialization allowed us to move quickly by breaking knowledge into manageable parts. Integration, which is now potentially enabled by AI, may allow us to reconnect those parts and reveal the deeper structure that has always linked them.

Connecting the dots is, in the end, what humanity has always sought to do. The difference is that we may finally have a tool capable of matching the scale of the problem.

Integration.
