Knowing that AI sovereignty is necessary is one thing. Knowing how to design it is another. Following our previous article on why organizations should consider sovereign architectures for AI, we now take a closer look at the key principles and mechanisms needed to design an effective sovereign AI architecture.

Turning the principle into an operational architecture

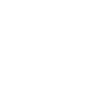

The MAGS-SLH Sovereign pattern provides a concrete framework for orchestrating AI agents while maintaining control, security and auditability.

Its design is built on a number of key principles: modularity, systematic human oversight, decentralized cooperation without raw data sharing, and secure execution mechanisms. In this article, the goal is to explain these principles, the components that support them, the operational flow behind a critical action, the best practices for deployment and the indicators that help measure value.

There are also complementary approaches such as Federated Learning, Swarm Learning, sovereign clouds and open source MLOps platforms. MAGS-SLH is designed to integrate and complement them where they bring real operational value.

Design principles to follow

The design principles can be summarized in a simple but effective triad.

- First, full ownership and control over components, frameworks and infrastructure. This ensures both strategic and operational autonomy.

- Second, sensitive data must remain within controlled environments that comply with European regulations, ensuring data sovereignty.

- Third, organizations should reduce dependency on foreign vendors by prioritizing European or open source solutions.

Compliance, security, control over components and data ownership must be built into the design from the start. This means controlling where data is processed, maintaining technological independence and embedding mechanisms such as traceability, impact simulation and human validation in the process. Together, these elements support strategic sovereignty.

Modularity is another key principle. Each component, such as the orchestrator, agents, logging system or sandbox, should be able to evolve, scale or be replaced independently. Human governance is also essential. The HACI interface, Human-in-the-Loop Arbitrated Cognitive Interface, must give operators clear visibility so they can understand, adjust or reject sensitive decisions. Finally, active resilience ensures that the system can detect a failing agent and automatically regenerate it, maintaining continuity while preserving security.
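To make the modularity principle concrete, here is a minimal Python sketch, assuming each component sits behind a small, stable interface so that it can be replaced without touching the orchestrator. The `AuditLogger` protocol and the `InMemoryLogger`, `PrefixedLogger` and `Orchestrator` classes are hypothetical names chosen for illustration; they are not part of the MAGS-SLH pattern as published.

```python
from typing import Protocol


class AuditLogger(Protocol):
    """Hypothetical interface that any logging backend must satisfy."""

    def record(self, event: str) -> None: ...


class InMemoryLogger:
    """Simple backend, e.g. for a proof of concept."""

    def __init__(self) -> None:
        self.events: list[str] = []

    def record(self, event: str) -> None:
        self.events.append(event)


class PrefixedLogger:
    """Alternative backend; stands in for a production ledger client."""

    def __init__(self, prefix: str) -> None:
        self.prefix = prefix
        self.events: list[str] = []

    def record(self, event: str) -> None:
        self.events.append(f"{self.prefix}{event}")


class Orchestrator:
    """Depends only on the AuditLogger interface, never on a concrete backend."""

    def __init__(self, logger: AuditLogger) -> None:
        self.logger = logger

    def run_mission(self, name: str) -> None:
        self.logger.record(f"mission started: {name}")
        self.logger.record(f"mission finished: {name}")


# Swapping the logging backend requires no change to Orchestrator.
poc_logger = InMemoryLogger()
Orchestrator(poc_logger).run_mission("fraud-check")

prod_logger = PrefixedLogger("[site-A] ")
Orchestrator(prod_logger).run_mission("fraud-check")
```

Because `Orchestrator` depends only on the `record` method, a proof-of-concept backend can later be replaced by a production one without changing the orchestration code.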

Core components and their role

The core components form the operational backbone of the architecture.

- First, organizations must maintain ownership of key components by prioritizing European or open source solutions. This helps ensure technological independence. Ownership should cover all elements of the system, including data management, infrastructure and frameworks, so that organizations retain full strategic autonomy and control over future developments.

- Second, data must remain within controlled environments, with clear governance over access and transfers, in line with European regulations.

The Core Engine acts as the central orchestration layer, coordinating missions and decision flows while managing overall operations. It interacts with the Crew Agent Manager, which is responsible for handling groups of agents, including their creation, supervision and lifecycle. The Crew Agent Manager instantiates specialized ephemeral AI agents designed to run for a specific task and then be removed once it is completed. This approach reduces the attack surface and enables automatic regeneration when needed. These agents can analyze logs, simulate the impact of changes and detect patterns or anomalies.
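The ephemeral-agent lifecycle described above, combined with the active resilience principle, can be sketched as follows. This is an illustration under stated assumptions, not the actual Crew Agent Manager: `EphemeralAgent`, `CrewAgentManager`, `run_task` and the `max_attempts` retry limit are hypothetical, and an agent is modelled as a plain function.

```python
import itertools
from typing import Callable, Optional


class EphemeralAgent:
    """Hypothetical single-task agent, discarded after one run."""

    _ids = itertools.count(1)

    def __init__(self, role: str, work: Callable[[str], str]) -> None:
        self.agent_id = next(self._ids)
        self.role = role
        self.work = work

    def run(self, payload: str) -> str:
        return self.work(payload)


class CrewAgentManager:
    """Creates one agent per task, removes it afterwards, regenerates on failure."""

    def __init__(self, max_attempts: int = 3) -> None:
        self.max_attempts = max_attempts
        self.active: dict[int, EphemeralAgent] = {}
        self.spawned = 0

    def run_task(self, role: str, work: Callable[[str], str], payload: str) -> str:
        last_error: Optional[Exception] = None
        for _ in range(self.max_attempts):
            agent = EphemeralAgent(role, work)
            self.active[agent.agent_id] = agent
            self.spawned += 1
            try:
                return agent.run(payload)
            except Exception as exc:  # failing agent: remember why, then regenerate
                last_error = exc
            finally:
                # The agent never outlives its task, whether it succeeded or crashed.
                del self.active[agent.agent_id]
        raise RuntimeError(f"{role} failed after {self.max_attempts} attempts") from last_error


# Usage: a log-analysis task whose first agent crashes.
calls = {"n": 0}


def flaky_log_analysis(payload: str) -> str:
    calls["n"] += 1
    if calls["n"] == 1:
        raise ValueError("agent crashed")
    return f"anomalies found in {payload}: 0"


manager = CrewAgentManager()
result = manager.run_task("log-analyst", flaky_log_analysis, "auth.log")
```

Each attempt creates a fresh agent and the `finally` clause removes it on success or failure, so no agent persists beyond its task; a crash simply leads to a replacement being created, up to the retry limit.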

The AI Advisor provides reasoned recommendations, which are presented through the HACI interface, the Human-in-the-Loop Arbitrated Cognitive Interface. This interface displays the recommendations and the results of impact simulations so that an operator can review and validate them. Execution security relies on sandboxes, isolated environments that contain software processes, and on Trusted Execution Environments, which guarantee the integrity of code and data during critical operations.
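As a rough illustration of the sandbox idea, the sketch below runs untrusted Python code in a separate interpreter with an empty environment, a throwaway working directory and a hard timeout. This is only process-level isolation and a simplified stand-in: a production sandbox would add OS-level controls such as containers or seccomp, and a Trusted Execution Environment adds hardware-backed guarantees that plain Python cannot provide. The `run_in_sandbox` function is hypothetical.

```python
import subprocess
import sys
import tempfile


def run_in_sandbox(code: str, timeout_s: float = 5.0) -> tuple[int, str]:
    """Run untrusted Python code in a separate, restricted process.

    Returns (exit code, stripped stdout), or (-1, "timeout") if the code
    runs longer than timeout_s seconds.
    """
    with tempfile.TemporaryDirectory() as workdir:
        try:
            proc = subprocess.run(
                # -I: isolated mode, ignores PYTHON* env vars and user site-packages
                [sys.executable, "-I", "-c", code],
                cwd=workdir,        # throwaway directory, deleted afterwards
                env={},             # no inherited secrets or credentials
                capture_output=True,
                text=True,
                timeout=timeout_s,  # the child is killed when the limit is hit
            )
        except subprocess.TimeoutExpired:
            return -1, "timeout"
    return proc.returncode, proc.stdout.strip()


exit_code, output = run_in_sandbox("print(6 * 7)")
```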

Traceability is ensured through an immutable eIDAS-compliant ledger, providing timestamped and signed records that can serve as reliable audit evidence. For cooperation between sites, anonymized and signed signals called “pheromones” help guide actions without sharing raw data. Finally, the Proof of Quality mechanism adds a distributed validation step to check the quality of a decision before it is executed.
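The article does not detail how the ledger is built. A common way to make a log tamper-evident is hash chaining, where each entry includes the hash of the previous one; the sketch below assumes that technique. The HMAC signature with a hard-coded `SIGNING_KEY` is a placeholder only: eIDAS-compliant records would rely on qualified electronic signatures and qualified time stamps from a trust service provider, which this code does not implement. `HashChainLedger` is a hypothetical name.

```python
import hashlib
import hmac
import json
import time

# Placeholder only: eIDAS-grade records need qualified signatures and
# time stamps from a trust service provider, not a shared secret.
SIGNING_KEY = b"demo-key-not-for-production"
GENESIS = "0" * 64


class HashChainLedger:
    """Append-only log in which every entry commits to the previous one."""

    def __init__(self, key: bytes) -> None:
        self.key = key
        self.entries: list[dict] = []

    @staticmethod
    def _digest(body: dict) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def _sign(self, digest: str) -> str:
        return hmac.new(self.key, digest.encode(), hashlib.sha256).hexdigest()

    def append(self, event: dict) -> dict:
        body = {
            "ts": time.time(),
            "event": json.loads(json.dumps(event)),  # private copy of the event
            "prev": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        digest = self._digest(body)
        entry = {**body, "hash": digest, "sig": self._sign(digest)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        prev = GENESIS
        for entry in self.entries:
            body = {"ts": entry["ts"], "event": entry["event"], "prev": entry["prev"]}
            digest = self._digest(body)
            if entry["prev"] != prev or digest != entry["hash"]:
                return False
            if not hmac.compare_digest(self._sign(digest), entry["sig"]):
                return False
            prev = entry["hash"]
        return True


ledger = HashChainLedger(SIGNING_KEY)
ledger.append({"step": "alert", "agent": "log-analyst"})
ledger.append({"step": "validated", "operator": "op-17"})
```

Changing any field of an earlier entry changes its hash, which breaks the link to every later entry, so `verify()` fails. A hash chain makes tampering detectable rather than impossible, which is why the records also need trustworthy signatures and time stamps.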

Detailed MAGS-SLH Sovereign architecture

The diagram shows the orchestration components, the simulation and retrieval-augmented generation (RAG) modules, agent management through the Crew Agent Manager, secure execution with sandboxes and Trusted Execution Environments, an immutable eIDAS-compliant ledger, and coordination mechanisms such as pheromones and Proof of Quality.

The process behind a critical action, step by step

The process behind a critical action is designed to remain clear and controlled. When an anomaly is detected or when a business need requires a sensitive action, the Core Engine triggers an alert and assembles a team of agents. These agents perform local analyses and publish encrypted signals, called pheromones, which enable decentralized correlation.
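One possible reading of the pheromone mechanism is sketched below, under explicit assumptions: each site replaces the raw indicator (for example an account identifier) with a keyed hash shared across the federation, signs the resulting signal, and a correlator flags fingerprints reported independently by several sites. The keys, `make_pheromone`, `verify_pheromone` and `correlate` are hypothetical. Keyed hashing is pseudonymization rather than full anonymization, the signals are signed but not encrypted here, and symmetric HMAC keys are used only for brevity where a real deployment would use asymmetric signatures so that verifiers cannot forge signals.

```python
import hashlib
import hmac
from collections import defaultdict

# Hypothetical keys: one federation-wide key for pseudonymizing indicators and
# one signing key per site. Both are placeholders for real key management.
FEDERATION_KEY = b"shared-pseudonymization-key"
SITE_KEYS = {"site-A": b"key-A", "site-B": b"key-B", "site-C": b"key-C"}


def _message(site: str, fingerprint: str, score: float) -> bytes:
    return f"{site}|{fingerprint}|{score:.2f}".encode()


def make_pheromone(site: str, raw_indicator: str, score: float) -> dict:
    """Turn a local finding into a signal that carries no raw data."""
    fingerprint = hmac.new(FEDERATION_KEY, raw_indicator.encode(), hashlib.sha256).hexdigest()
    score = round(score, 2)
    sig = hmac.new(SITE_KEYS[site], _message(site, fingerprint, score), hashlib.sha256).hexdigest()
    return {"site": site, "fingerprint": fingerprint, "score": score, "sig": sig}


def verify_pheromone(signal: dict) -> bool:
    msg = _message(signal["site"], signal["fingerprint"], signal["score"])
    expected = hmac.new(SITE_KEYS[signal["site"]], msg, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signal["sig"])


def correlate(signals: list[dict], min_sites: int = 2) -> dict[str, set[str]]:
    """Flag fingerprints independently reported by at least min_sites sites."""
    sites_by_fp: dict[str, set[str]] = defaultdict(set)
    for signal in signals:
        if verify_pheromone(signal):  # unsigned or tampered signals are ignored
            sites_by_fp[signal["fingerprint"]].add(signal["site"])
    return {fp: sites for fp, sites in sites_by_fp.items() if len(sites) >= min_sites}


signals = [
    make_pheromone("site-A", "account-4711", 0.91),
    make_pheromone("site-B", "account-4711", 0.78),
    make_pheromone("site-C", "account-0815", 0.65),
]
flagged = correlate(signals)
```

The correlator only ever sees opaque fingerprints and scores: it learns that two sites observed the same suspicious indicator without either site sharing the indicator itself.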

Before any action is executed, a digital twin simulates the potential impact of the different options. The AI Advisor then combines these elements and proposes explained scenarios, using an LLM with RAG to ground its recommendations in up-to-date context. The decision then goes through the Proof of Quality mechanism and the HACI interface for human validation.
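The article does not specify how Proof of Quality validates a decision. Assuming a simple k-of-n quorum, where independent validators (for instance run by different sites or teams) each check the proposal and it passes only if enough of them approve, the step could look like this. `Proposal`, its fields and the three validator rules are hypothetical; `simulated_downtime_min` stands for whatever impact estimate the digital twin produces.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Proposal:
    action: str
    simulated_downtime_min: float  # impact estimated by the digital twin
    advisor_confidence: float      # 0..1, reported by the AI Advisor
    has_explanation: bool


Validator = Callable[[Proposal], bool]


def proof_of_quality(proposal: Proposal, validators: list[Validator], min_approvals: int) -> bool:
    """Accept the proposal only if at least min_approvals validators approve it."""
    approvals = sum(1 for validate in validators if validate(proposal))
    return approvals >= min_approvals


# Hypothetical, independent quality checks.
VALIDATORS: list[Validator] = [
    lambda p: p.simulated_downtime_min <= 15,
    lambda p: p.advisor_confidence >= 0.7,
    lambda p: p.has_explanation,
]
```

Only a proposal that passes the quorum would then be presented in HACI for the final human decision.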

If the action is approved, it is executed within a Trusted Execution Environment. Each step is timestamped, signed and recorded in the immutable ledger. This process ensures that every critical decision is prepared, simulated, explained, validated and fully traceable.
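The ordering guarantee in this flow, namely that nothing is executed before it has been prepared, simulated, explained and validated, can be enforced with a small state machine. The sketch below is an illustration with hypothetical names (`Step`, `CriticalAction`, `advance`); in a real deployment each transition would also be signed and written to the ledger.

```python
from enum import Enum


class Step(Enum):
    PREPARED = 1
    SIMULATED = 2
    EXPLAINED = 3
    VALIDATED = 4
    EXECUTED = 5


class CriticalAction:
    """Refuses to skip steps: execution is impossible without validation."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.history: list[Step] = []

    def advance(self, step: Step) -> None:
        if len(self.history) == len(Step):
            raise ValueError(f"{self.name}: already executed")
        expected = Step(len(self.history) + 1)
        if step is not expected:
            raise ValueError(f"{self.name}: expected {expected.name}, got {step.name}")
        self.history.append(step)


action = CriticalAction("isolate-node-7")
for step in (Step.PREPARED, Step.SIMULATED, Step.EXPLAINED):
    action.advance(step)
```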

What are the best practices, and how should it be deployed?

To deploy a sovereign AI architecture without entering unknown technical territory, best practices suggest starting with targeted pilots focused on high-value and high-risk use cases. Examples include multi-entity fraud detection, monitoring sensitive patient data environments or managing critical points in network operations.

These pilots help test the HACI interface, evaluate acceptable latency across the simulation, validation and execution cycle, and assess the robustness of Trusted Execution Environments. The next step is to industrialize the core components, such as the Core Engine, the Crew Agent Manager and eIDAS-compliant logging, while gradually introducing interoperability between sites through pheromone and Proof of Quality mechanisms.

From an operational perspective, full human supervision should be reserved for truly sensitive actions. For low-risk routine operations, automated trust rules avoid creating unnecessary workload. A RAG strategy also helps keep LLM outputs relevant by grounding them in up-to-date contextual knowledge.
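The split between full human supervision and automated trust rules can be expressed as a routing function. In this sketch the allow-list `AUTO_APPROVED_KINDS`, the `RISK_THRESHOLD` of 0.3 and the 0-to-1 risk score are hypothetical values chosen for illustration; the design choice worth noting is that any action failing a trust rule falls back to human validation through HACI, never the other way round.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO = "auto-approved by trust rule"
    HUMAN = "sent to HACI for human validation"


@dataclass
class Action:
    kind: str
    risk_score: float  # 0 (benign) .. 1 (critical), hypothetical scale
    reversible: bool


# Hypothetical trust rules: only routine, reversible, low-risk actions qualify.
AUTO_APPROVED_KINDS = {"rotate-logs", "refresh-cache"}
RISK_THRESHOLD = 0.3


def route(action: Action) -> Route:
    if (
        action.kind in AUTO_APPROVED_KINDS
        and action.reversible
        and action.risk_score < RISK_THRESHOLD
    ):
        return Route.AUTO
    return Route.HUMAN  # default to human oversight when any rule fails
```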

A key recommendation is to prioritize open source components during the proof of concept phase, as they provide transparency and reversibility. Support contracts or commercial versions should only be introduced once functional and compliance guarantees have been validated.

Which indicators can be used to manage and demonstrate value?

The right indicators for managing and demonstrating value are unambiguous: they must link security, compliance and operational performance. Mean time to repair (MTTR) for critical anomalies, the percentage of sensitive decisions validated by a human, the ability to produce eIDAS reports without reservations and the rate of undetected incidents are essential measures. These KPIs demonstrate operational value and reassure business teams and regulators about the effectiveness of the system.
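Several of these indicators reduce to simple arithmetic once incidents and decisions are logged. The sketch assumes a hypothetical `Incident` record, defines MTTR over critical incidents only and the undetected rate as the share of incidents not caught by the system itself; the sample values are made up for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Incident:
    detected_at: datetime
    resolved_at: datetime
    critical: bool
    caught_by_system: bool  # False if found later, e.g. by an audit


def mttr(incidents: list[Incident]) -> timedelta:
    """Mean time to repair, computed over critical incidents only."""
    durations = [i.resolved_at - i.detected_at for i in incidents if i.critical]
    if not durations:
        raise ValueError("no critical incidents in this period")
    return sum(durations, timedelta()) / len(durations)


def undetected_rate(incidents: list[Incident]) -> float:
    if not incidents:
        raise ValueError("no incidents in this period")
    return sum(not i.caught_by_system for i in incidents) / len(incidents)


def human_validation_rate(sensitive_decisions: int, human_validated: int) -> float:
    return human_validated / sensitive_decisions


# Made-up sample data for one reporting period.
t0 = datetime(2024, 1, 1, 8, 0)
incidents = [
    Incident(t0, t0 + timedelta(minutes=30), critical=True, caught_by_system=True),
    Incident(t0, t0 + timedelta(minutes=90), critical=True, caught_by_system=False),
    Incident(t0, t0 + timedelta(minutes=10), critical=False, caught_by_system=True),
]
```

With these sample values, MTTR is one hour ((30 + 90) / 2 minutes) and one incident in three went undetected by the system.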

What are the technical risks, and how can they be mitigated?

Architecture value cannot be measured by metrics alone. While indicators remain essential, they are not sufficient to fully map technical risks, which require precise and operational safeguards:

- A Zero Trust approach that isolates each component and secures communication channels.
- Redundant Trusted Execution Environments and continuous vulnerability monitoring.
- Controlled cognitive load for operators, through recommendation engines and a well-designed HACI interface.
- Proactive management of model obsolescence through regular rotation and retraining.
- Mechanisms to detect prompt injections and behavioral anomalies, supported by immutable logging that allows any attempted alteration to be reconstructed if needed.
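For the behavioral anomaly detection mentioned above, a simple baseline approach is a per-agent z-score: flag an observation that lies more than a few standard deviations from that agent's own history. The sketch assumes a numeric activity metric (for example outbound calls per minute), a threshold of 3 and a minimum history of 10 observations, all hypothetical choices; `BehaviorMonitor` is an illustrative name. It does not detect prompt injections, which need dedicated controls.

```python
import statistics


class BehaviorMonitor:
    """Flags an agent whose activity deviates sharply from its own baseline."""

    def __init__(self, threshold: float = 3.0, min_history: int = 10) -> None:
        self.threshold = threshold
        self.min_history = min_history
        self.history: dict[str, list[float]] = {}

    def observe(self, agent_id: str, value: float) -> bool:
        """Record one observation and return True if it looks anomalous."""
        past = self.history.setdefault(agent_id, [])
        anomalous = False
        if len(past) >= self.min_history:
            mean = statistics.fmean(past)
            stdev = statistics.pstdev(past)
            if stdev == 0:
                anomalous = value != mean  # any change from a constant baseline
            else:
                anomalous = abs(value - mean) / stdev > self.threshold
        if not anomalous:
            past.append(value)  # keep outliers out of the baseline
        return anomalous


monitor = BehaviorMonitor()
baseline = [10, 12, 11, 9, 10, 11, 12, 10, 9, 11]  # made-up calls per minute
```

Flagged values are deliberately kept out of the baseline so that repeated outliers cannot widen it and mask themselves.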

It is essential to ensure that data remains within controlled environments, in line with European regulations, and to prioritize components the organization owns or open source components to maintain technological independence. This controlled data localization allows organizations to retain full control over sensitive information, strengthening sovereignty and enabling them to respond more effectively to regulatory changes or crisis situations.

Operational implementation: a concise roadmap

Operational implementation follows a concise and pragmatic roadmap. It starts with diagnosing and mapping sensitive areas, launching a proof of concept on a priority use case, conducting penetration tests and simulation exercises, and then industrializing modularity and interoperability. Deployment can then gradually expand across multiple sites, supported by continuous governance with KPI monitoring and regular audits.

The diagnostic phase should also assess ownership of components, data localization and dependency on foreign vendors to ensure the architecture is truly sovereign. When carried out with a structured approach, this process helps balance speed of deployment with long-term resilience.

From the proof of concept stage onward, define interoperability rules and metrics such as SLOs/SLAs, MTTR and the percentage of human-validated actions. These indicators help measure maturity and make it easier to integrate with sovereign clouds or partner environments.

Ultimately, a pragmatic engineering approach to this strategic challenge means designing a sovereign architecture that combines dynamic agent generation, decentralized cooperation without sharing raw data, distributed validation and certified traceability. When deployed through an iterative and controlled approach, it transforms AI from a regulatory risk into a reliable, auditable and sovereign operational asset.

Many existing patterns and projects such as Federated Learning, sovereign clouds, open source MLOps platforms, Trusted Execution Environments and distributed ledgers already provide valuable building blocks. MAGS-SLH is designed to work with these solutions and fill operational and regulatory gaps, including end-to-end eIDAS logging, systematic HACI oversight and the Proof of Quality mechanism, rather than replacing them entirely.
