68 percent of employees use ChatGPT at work without their employer’s knowledge. That means many companies are probably sharing personal or confidential data with a public, unsecured application without realising it. This alarming reality raises important questions about the ethical and legal implications of generative AI, and more broadly, about what it means to build trustworthy AI. What risks does uncontrolled use of generative AI pose for businesses? This article offers a few answers.

The ethics of AI go far beyond the walls of any single organisation and touch on major societal issues such as the risks of disinformation, the homogenisation of thought, the social impact of click work, and the environmental toll. While these topics are central to the debate around the rise of generative AI, it would be impossible to address them all here. This section will therefore focus specifically on the ethical considerations businesses need to take into account when deploying generative AI applications.

What is AI ethics?

The term “ethics” comes directly from philosophy. According to the definition given by the Académie française, it refers to:

- A reflection on human behaviour and the values that underpin it, carried out with the aim of establishing a doctrine or a science of morality.

- A set of moral principles that apply to individuals engaged in the same profession or activity.

However, because ethics is inherently linked to the specific context in which it is applied (culture, profession, field of activity), its definition is never absolute. Ethics is not a fixed set of rules, but a framework for questioning, guiding decisions, and ensuring accountability. In the case of artificial intelligence, ethics means critically assessing the purpose, impact, and limitations of these technologies to ensure their use aligns with both legal norms and human values.

This is precisely what makes the topic so complex and deeply philosophical.

When it comes to applying AI in the workplace, ethical questions must be approached through the lens of acceptability. Where is it acceptable to deploy AI for a given group of users? Under what conditions can its use be considered acceptable? What boundaries and safeguards need to be in place to ensure its acceptability? These are all essential questions to ask, always in relation to the specific group of users or individuals exposed to the AI application.

Why should we ask the question of trustworthy AI?

The French are among the most sceptical when it comes to artificial intelligence:

- Only 31% say they trust it, far behind countries like India where that figure reaches 75%

- 53% believe AI poses a significant risk to data security

- Half consider it a major issue for copyright and intellectual property rights

Trust in AI, and by extension in generative AI, is increasingly diluted by crucial questions of ethics and legal responsibility, mixed with irrational fears fuelled by science fiction and media outlets that thrive on sensational but ultimately unreliable headlines.

68% of French people are concerned about the rise of generative AI.

Source: “Les Français et les IA génératives”, Ifop for Talan, May 2023

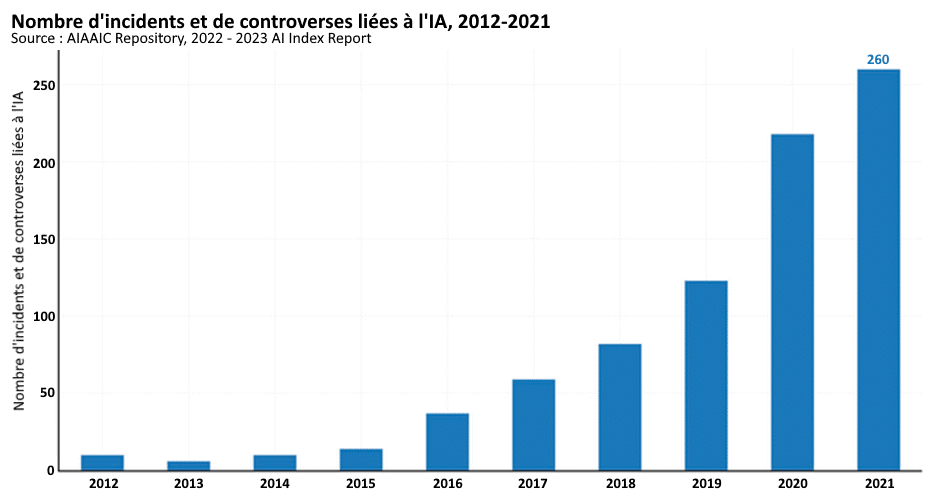

While it is important to focus on the issues that truly matter, we are seeing a sharp increase in incidents involving unethical uses of AI. According to the global AIAAIC database, which tracks such cases worldwide, the number of AI-related incidents and controversies grew 26-fold between 2012 and 2021 – from just 10 to 260 reported cases. These include numerous deepfakes, as well as bias embedded in training data.

With risks becoming increasingly numerous and tangible for the individuals affected, companies must take the issue seriously and make it a core part of any generative AI initiative.

The AI Act: the European foundation for trustworthy AI

Just as it did with the General Data Protection Regulation (GDPR), Europe aims to take the lead in regulating the use of AI. For several years now, it has been working on an ambitious draft regulation that was approved by the European Parliament on 14 June 2023, paving the way for a legal framework to govern AI usage.

Under this regulation, companies will be required to classify all their AI use cases according to their level of ethical risk:

- Minimal

- Limited

- High

- Unacceptable

With this regulation, Europe is drawing a clear line when it comes to AI applications with significant social impact, setting itself apart from countries such as China, which relies heavily on facial recognition and social scoring systems.

Depending on the risk level assessed for each AI application, companies will need to implement appropriate risk mitigation measures. Compliance with the AI Act can begin right away, starting with a full inventory and classification of AI use cases across the organisation. The principles of ethics-by-design should be embedded from the start to ensure that ethical concerns are addressed at every stage of an AI project. These principles are also at the core of our own methodology for building and deploying AI.
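The inventory step can be sketched in a few lines of Python. This is a minimal illustration, not a compliance tool: the `AIUseCase` record, the example use cases and the risk levels assigned to them are all hypothetical. In practice, the risk level of each use case is a legal and ethical judgment made by people, not something computed by code. The sketch only shows how an inventory organised around the four risk levels might be kept and queried.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import Enum


class RiskLevel(Enum):
    """The four risk levels listed above."""
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3
    UNACCEPTABLE = 4


@dataclass(frozen=True)
class AIUseCase:
    """One entry in the inventory (hypothetical structure)."""
    name: str
    owner: str
    risk: RiskLevel  # assigned by a human review, not computed here


def group_by_risk(inventory):
    """Return a dict mapping each risk level to the names of its use cases."""
    grouped = defaultdict(list)
    for use_case in inventory:
        grouped[use_case.risk].append(use_case.name)
    return dict(grouped)


def needs_attention(inventory):
    """List use cases rated HIGH or UNACCEPTABLE, most severe first."""
    flagged = [u for u in inventory if u.risk.value >= RiskLevel.HIGH.value]
    return sorted(flagged, key=lambda u: u.risk.value, reverse=True)


# Illustrative entries only; the levels shown are assumptions for the example.
inventory = [
    AIUseCase("Internal meeting summariser", "IT", RiskLevel.MINIMAL),
    AIUseCase("Customer service chatbot", "Support", RiskLevel.LIMITED),
    AIUseCase("CV pre-screening assistant", "HR", RiskLevel.HIGH),
]

for use_case in needs_attention(inventory):
    print(f"{use_case.name} ({use_case.owner}): {use_case.risk.name}")
```

Keeping the risk level as an explicit field makes it easy to see at a glance which use cases call for mitigation measures first.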

The three major pitfalls of AI

Due to its underlying machine learning mechanisms, AI inherently raises several challenges. While these issues don’t always have straightforward solutions, they must be acknowledged and assessed in order to promote trustworthy algorithms.

The challenge of fairness

Training data inevitably comes with the risk of bias, especially given the massive volume of data required to train generative AI models. The list of websites used to train ChatGPT, for example, clearly highlights a lack of cultural diversity and representativeness in terms of populations and schools of thought. One telling case comes from the US start-up Textio, which identified multiple social biases (related to age, gender or ethnicity) when testing ChatGPT on 167 job posting drafts.

Algorithmic bias is therefore closely linked to a lack of diversity and inclusion in the datasets used. Building trustworthy AI requires rigorous statistical testing of training data. It also calls for greater diversity and inclusion in the tech ecosystem, by widening the range of AI developer profiles in terms of gender balance, cultural backgrounds, educational paths and social inclusion.
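One simple form such a statistical test can take is comparing the share of each group in a dataset with a reference distribution. The sketch below is only an assumption about how this might look, using the Python standard library alone. The function name `representation_gaps`, the group labels and the reference shares are hypothetical, and a real audit would cover far more than a single attribute.

```python
from collections import Counter


def representation_gaps(labels, reference_shares):
    """Compare observed group shares with reference shares.

    labels: one group label per training example.
    reference_shares: expected share per group (each > 0, summing to 1).
    Labels absent from reference_shares are ignored.
    Returns (gaps, chi_square), where gaps maps each group to
    observed share minus expected share.
    """
    total = len(labels)
    if total == 0:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    gaps = {}
    chi_square = 0.0
    for group, expected_share in reference_shares.items():
        observed = counts.get(group, 0)
        expected = expected_share * total
        gaps[group] = observed / total - expected_share
        # Pearson chi-square goodness-of-fit term for this group.
        chi_square += (observed - expected) ** 2 / expected
    return gaps, chi_square


# Hypothetical data: 100 examples, reference population split 50/50.
labels = ["group_a"] * 80 + ["group_b"] * 20
gaps, chi_square = representation_gaps(labels, {"group_a": 0.5, "group_b": 0.5})
print(gaps)
print(chi_square)
```

In this made-up example, `group_a` is over-represented by about 30 percentage points. The resulting statistic is far above 3.84, the usual 5% threshold for two groups, which would flag the dataset for closer review.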

The challenge of transparency

When it comes to transparency, the first essential rule is simple. Never create confusion about who is speaking in a human-AI interaction. Users must always be clearly informed when they are interacting with an AI. This duty of disclosure is fundamental and forms the basis of usage transparency.

Another issue is the lack of explainability behind AI-generated decisions and content. These systems are built on transformer-based models with large, complex neural networks, which makes them inherently opaque and makes it difficult to detect hallucinations, meaning false responses that appear highly convincing.

This is why companies need to be able to explain how and why an AI system reaches its conclusions. One way to do this is by indicating which sources of information were used to generate a given response. This concept of AI explainability is critical to meeting ethical standards and is an essential part of transparent AI practices.
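As an illustration of the two rules above (disclosing that an AI is speaking, and listing the sources behind a response), here is a minimal Python sketch. The `SourcedAnswer` structure and the `render_answer` function are hypothetical names, and so is the example answer. The sketch assumes the application already tracks which documents were used to generate a response.

```python
from dataclasses import dataclass, field


@dataclass
class SourcedAnswer:
    """An AI-generated answer plus the sources it drew on (hypothetical)."""
    text: str
    sources: list = field(default_factory=list)


def render_answer(answer):
    """Format an answer with an AI disclosure and its list of sources."""
    lines = ["[AI-generated response]", answer.text]
    if answer.sources:
        lines.append("Sources:")
        lines.extend(f"  {i}. {src}" for i, src in enumerate(answer.sources, 1))
    else:
        # Make the absence of sources visible rather than hiding it.
        lines.append("Sources: none available; please verify this answer.")
    return "\n".join(lines)


answer = SourcedAnswer(
    text="Employees may carry over up to five days of leave.",
    sources=["HR policy handbook, section 4.2"],
)
print(render_answer(answer))
```

Showing an explicit notice when no source is available is a design choice: it tells the user that the answer could be a hallucination and should be checked.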

The challenge of accountability

The company remains fully responsible for the AI it deploys, including from a legal standpoint, and therefore for the decisions made by its AI systems. Because AI automates decisions at scale, the company also bears the risk of amplifying any ethical issue. The earlier ethical considerations are integrated into the development process, the more effectively these risks can be mitigated.

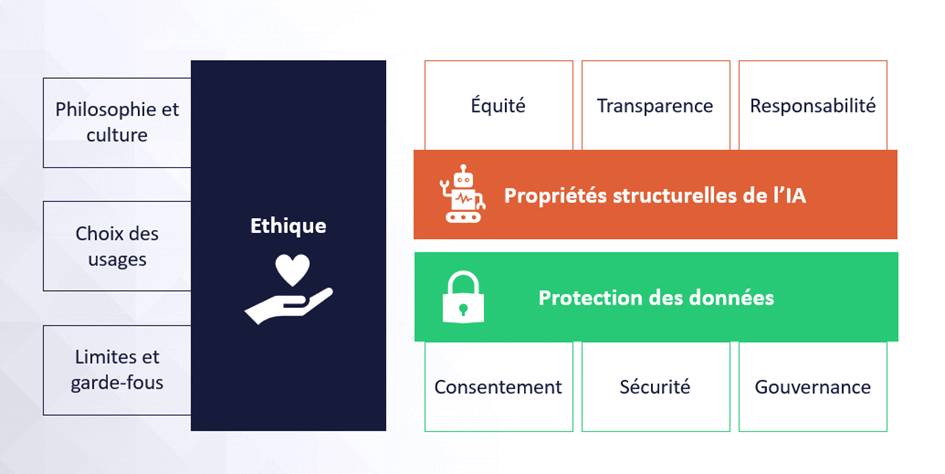

As we can see, the topic of AI ethics spans multiple areas. The foundation is data protection and, at a minimum, compliance with the GDPR. Without the protection of personal data, it is impossible to claim the development of trustworthy AI. Then come the structural properties of AI, particularly fairness and transparency, which must be addressed by the data scientists building the algorithms. Finally, ethics is, at its core, a philosophical matter. Each company must therefore determine its own fields of application, define appropriate use cases, and establish clear limits and safeguards.

The environmental impact of AI

The environmental impact of AI is another critical issue to consider when aiming to develop responsible and sustainable systems. Training and running generative AI models require substantial computing power and data storage capacity, both of which contribute significantly to environmental degradation.

This is why it is crucial to rely on pre-trained models (GPT, for example, stands for Generative Pre-trained Transformer) and adapt them to the company’s specific use cases, using techniques like transfer learning. Doing so avoids the need to retrain models from scratch on massive datasets for every single use case. Instead, the environmental cost of training large-scale generic models like GPT or Bard is spread across millions of use cases worldwide.

In parallel, research is ongoing into new algorithmic approaches that aim to deliver similar performance with significantly lower environmental impact. These emerging methods offer a path toward AI systems that are both powerful and eco-responsible.

Frugal AI and eco-design of AI algorithms

The concept of frugality was introduced in 2019 through a call from researchers at the Allen Institute for AI, advocating for frugal or green AI. This means developing AI that is more efficient, more inclusive, and less demanding in terms of data and energy consumption, which is directly linked to computing power. The massive volume of data required to train generative AI models like ChatGPT significantly increases the carbon footprint of digital technologies. Companies must therefore integrate frugality into their processes to ensure they are deploying AI in a more sustainable and responsible way.

At Orange Business, we apply eco-design principles throughout our methodology.

💡 What concrete actions can be taken?

Any generative AI initiative must therefore take into account a wide range of issues related to trust, security and ethics. Whether to comply with the AI Act regulation or to ensure the most responsible use, all of these questions must be placed at the heart of your AI deployment strategy.

Here are 12 concrete actions to prioritise for rolling out trustworthy AI in your organisation.

6 actions data scientists and AI professionals can implement:

- Embed ethics-by-design principles from the start of every AI project

- Profile training data to ensure it is representative and inclusive

- Test and validate algorithms against clear ethical criteria

- Conduct regular audits of AI systems in production from an ethical standpoint

- Embrace algorithmic sobriety and apply frugal AI principles

- Commit to a code of ethics such as the Data Scientist’s Hippocratic Oath

6 actions that must be carried out by the company:

- Comply with the GDPR and the AI Act

- Appoint a Chief Ethics Officer

- Establish a Trust and Ethics Charter

- Raise awareness about the challenges and risks of AI

- Be transparent with users about how AI is used

- Maintain the ability to take back control from AI
